Slimecore

Treating data as a product, not a report. Owning analytics end-to-end for a live-service multiplayer game.

Intro

Slimecore was a live-service 3v3 round-based hero shooter being built at Genpop Interactive, a small independent studio of around 20–25 people. I was the sole analyst, with no team above or below me. That meant owning the full analytics function: internal tooling for designers and engineers, executive reporting for leadership and publishing partners, and the first player-facing data products the studio ever shipped.

The centerpiece of my tenure was a prototype-turned-production web app that went out to players after every match — work that had to be fast, legible, and correct in a way internal dashboards don't quite demand. Genpop closed in September 2025 when a funding partnership fell through. Everything on this page shipped before then.

Analytics Foundation

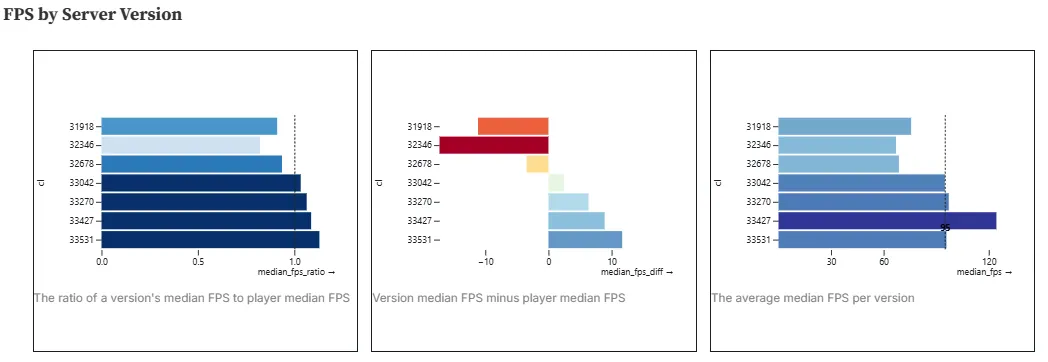

The internal analytics layer I inherited and expanded was a suite of interconnected Observable Notebooks: living dashboards that pulled in-game data within minutes of match completion. With dev playtests running daily, the design and engineering teams used them as their primary self-serve source for game data and insights. PlaytestView was the per-match deep-dive: damage breakdowns, FPS percentiles by map, augment usage, economy stats. Match Summary was the lighter version, for quick post-playtest checks. Multi-Match Summary (MMS), which I built from scratch in my second month (originally to track stats across a LAN session with friends), was the first tool to aggregate across a session. Designers were requesting summaries from it within days of launch, and it remained a core tool until I built the Aggregate Data Analytics (ADA) notebook.

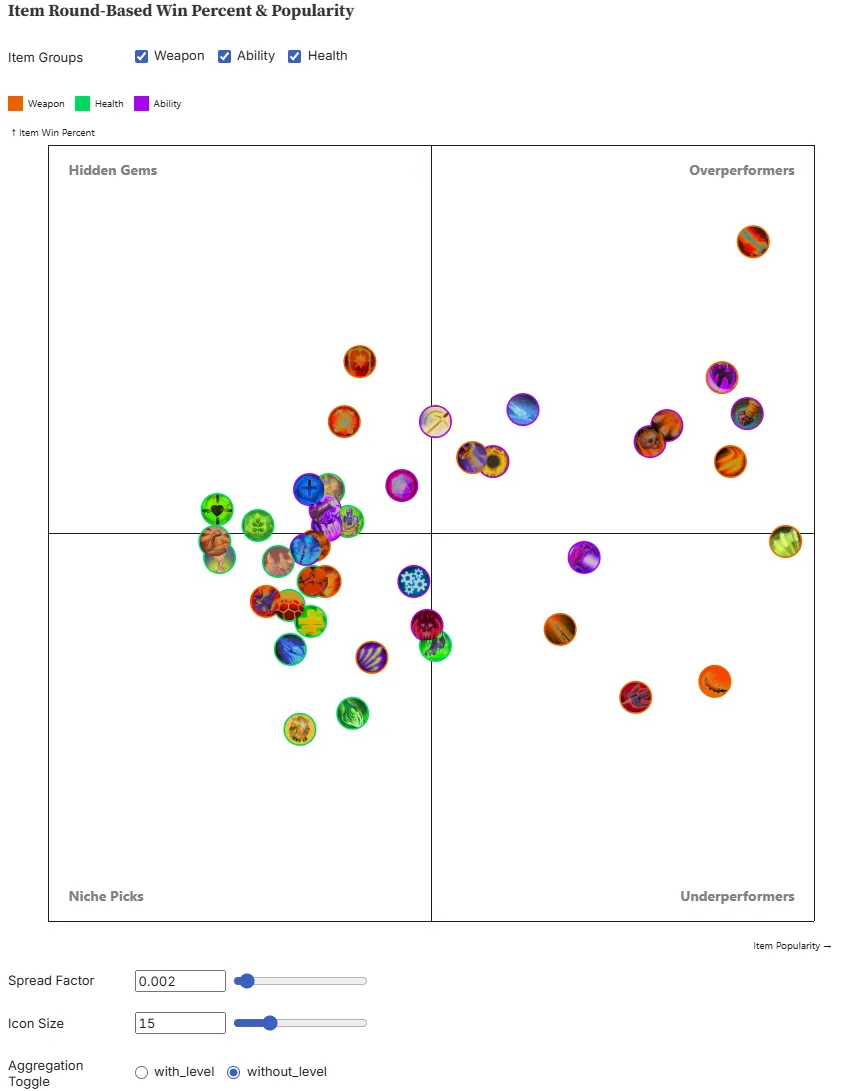

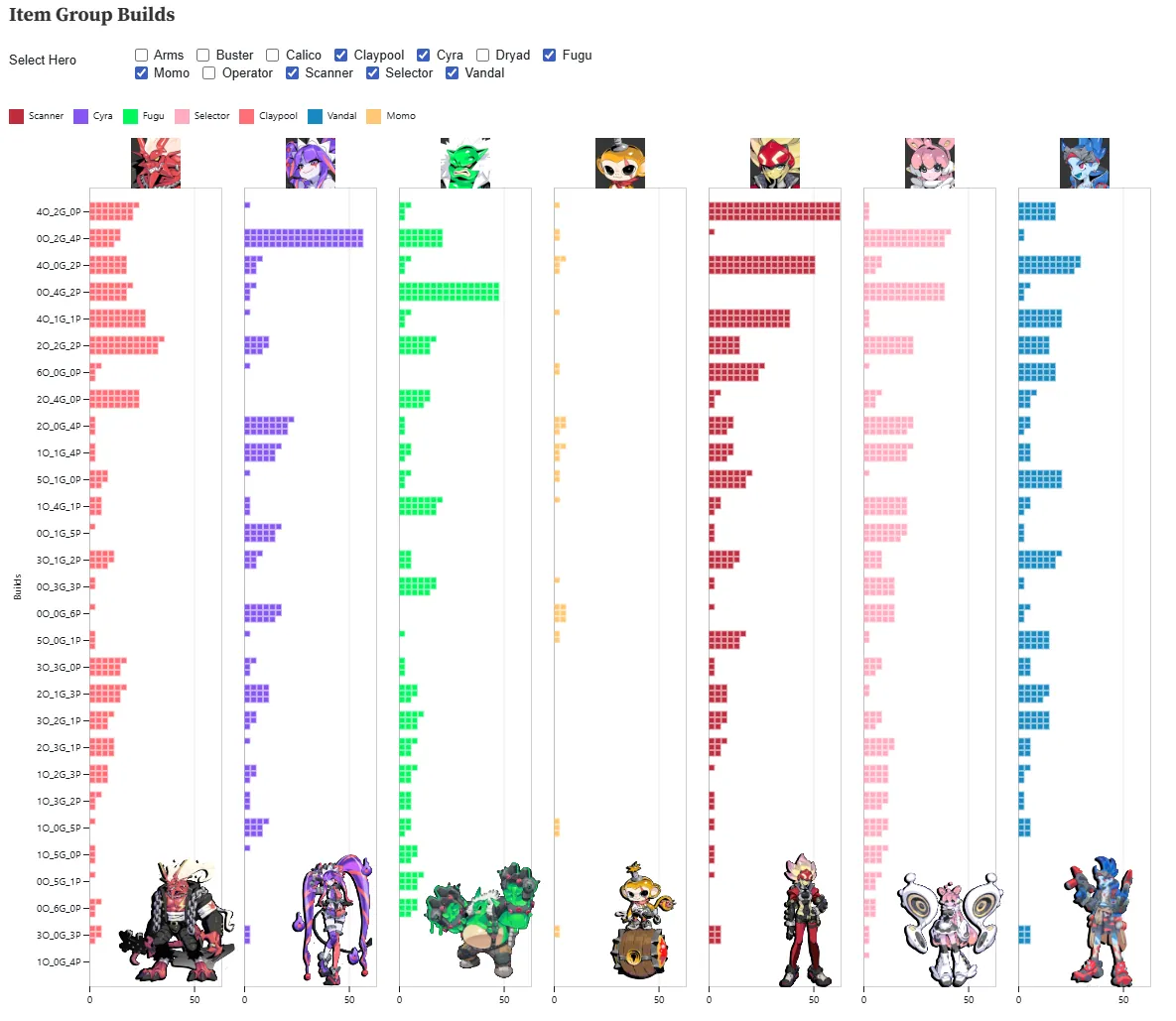

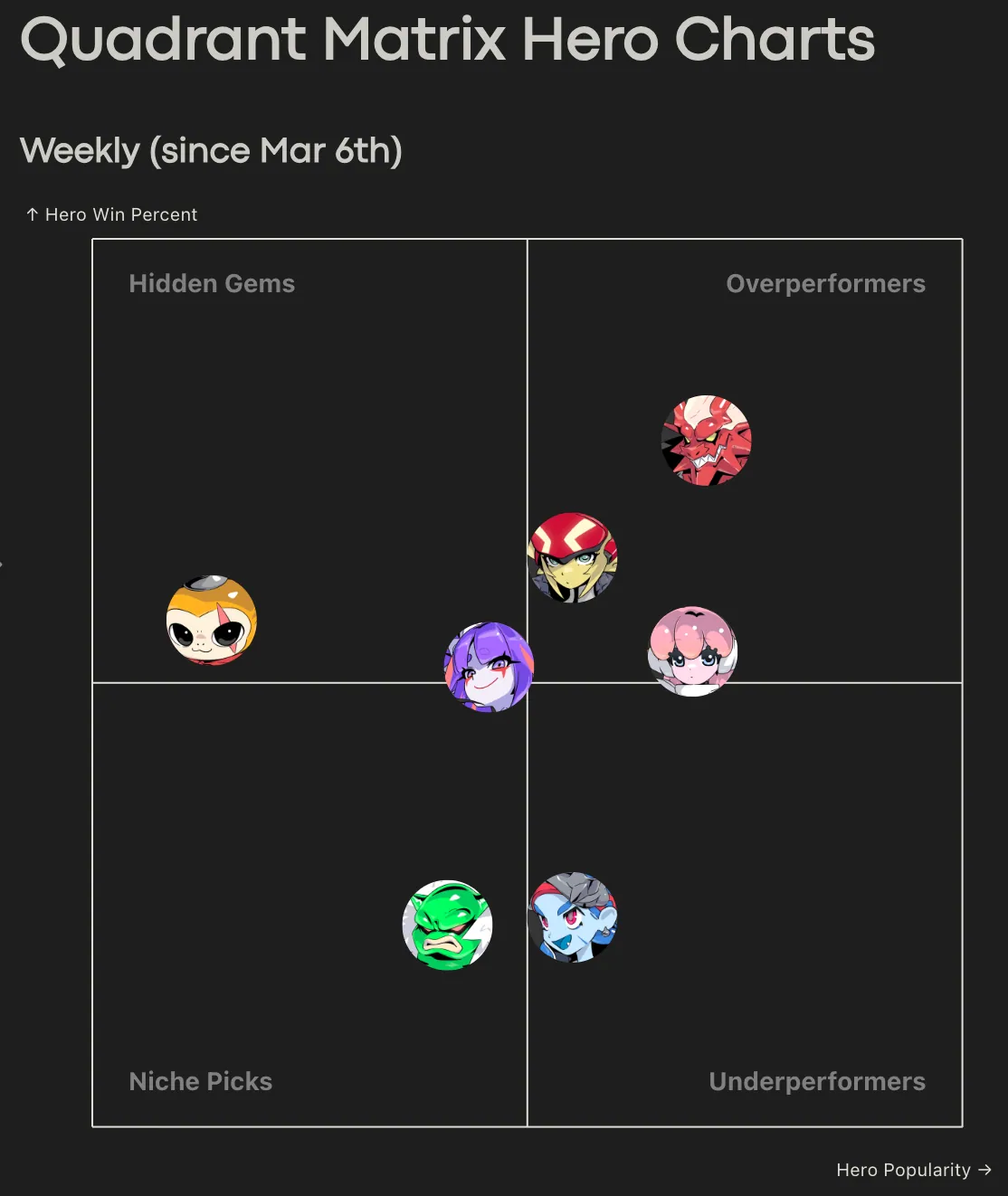

The ADA notebook was the most ambitious of the four: a filter-driven view across the game's full match history, later redesigned to scale from a few hundred matches to thousands. One of its headline outputs was a four-quadrant matrix chart that categorized every in-game item and hero, against whatever filter set the user picked, as a Hidden Gem, Niche Pick, Overperformer, or Underperformer.
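The notebook's actual axes and cut-offs aren't described here, so the sketch below assumes a common setup for this kind of chart: pick rate on one axis, win rate on the other, with both split at the median of the currently filtered set. Under that assumption the four labels map as shown; `EntityStats` and `classify` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class EntityStats:
    name: str
    pick_rate: float  # assumed axis: share of filtered matches featuring the item/hero
    win_rate: float   # assumed axis: share of those appearances that ended in a win


def classify(entities: list[EntityStats]) -> dict[str, str]:
    """Label each item/hero by quadrant, splitting both axes at the median of
    the current (already filtered) set. Values on the median count as 'high'."""
    if not entities:
        return {}
    pick_cut = median(e.pick_rate for e in entities)
    win_cut = median(e.win_rate for e in entities)

    labels = {}
    for e in entities:
        popular = e.pick_rate >= pick_cut
        winning = e.win_rate >= win_cut
        if winning and not popular:
            labels[e.name] = "Hidden Gem"      # rarely picked, wins often
        elif winning:
            labels[e.name] = "Overperformer"   # widely picked, wins often
        elif popular:
            labels[e.name] = "Underperformer"  # widely picked, wins rarely
        else:
            labels[e.name] = "Niche Pick"      # rarely picked, wins rarely
    return labels
```

Splitting at the median of whatever set the filters produce, rather than at fixed thresholds, keeps all four quadrants populated even when a filter shrinks the match pool to a narrow slice.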

Performance work mattered across all of these. Consolidating PlaytestView's seven core Databricks queries into a single DuckDB query cut load times by 11–26% across network throttle settings, and converting MMS to DuckDB cut its load time by roughly 30% on a 70-match collection.
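The consolidation idea can be illustrated with conditional aggregation: instead of one query per metric, a single pass over the event rows computes every metric at once. This is a minimal sketch, not the studio's query. It uses Python's built-in `sqlite3` as a stand-in for DuckDB so it runs without extra dependencies, and the `events` table, its columns, and the three metrics shown are hypothetical.

```python
import sqlite3

# Hypothetical event table: one row per combat event in a match.
SCHEMA = """
CREATE TABLE events (
    match_id  TEXT,
    player_id TEXT,
    kind      TEXT,
    amount    REAL
)
"""

# One query, one scan: each CASE expression picks out the rows for a single
# metric, replacing what would otherwise be a separate query per metric.
SUMMARY_SQL = """
SELECT
    player_id,
    SUM(CASE WHEN kind = 'damage'  THEN amount ELSE 0 END) AS damage,
    SUM(CASE WHEN kind = 'healing' THEN amount ELSE 0 END) AS healing,
    COUNT(CASE WHEN kind = 'kill' THEN 1 END)              AS kills
FROM events
WHERE match_id = ?
GROUP BY player_id
ORDER BY player_id
"""


def match_summary(conn: sqlite3.Connection, match_id: str) -> list[tuple]:
    """Return (player_id, damage, healing, kills) rows for one match."""
    return conn.execute(SUMMARY_SQL, (match_id,)).fetchall()
```

Fewer queries means fewer round-trips and one scan of the data instead of several, which is plausibly where most of a consolidation speed-up comes from; the actual gains depend on the real schema and network conditions.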

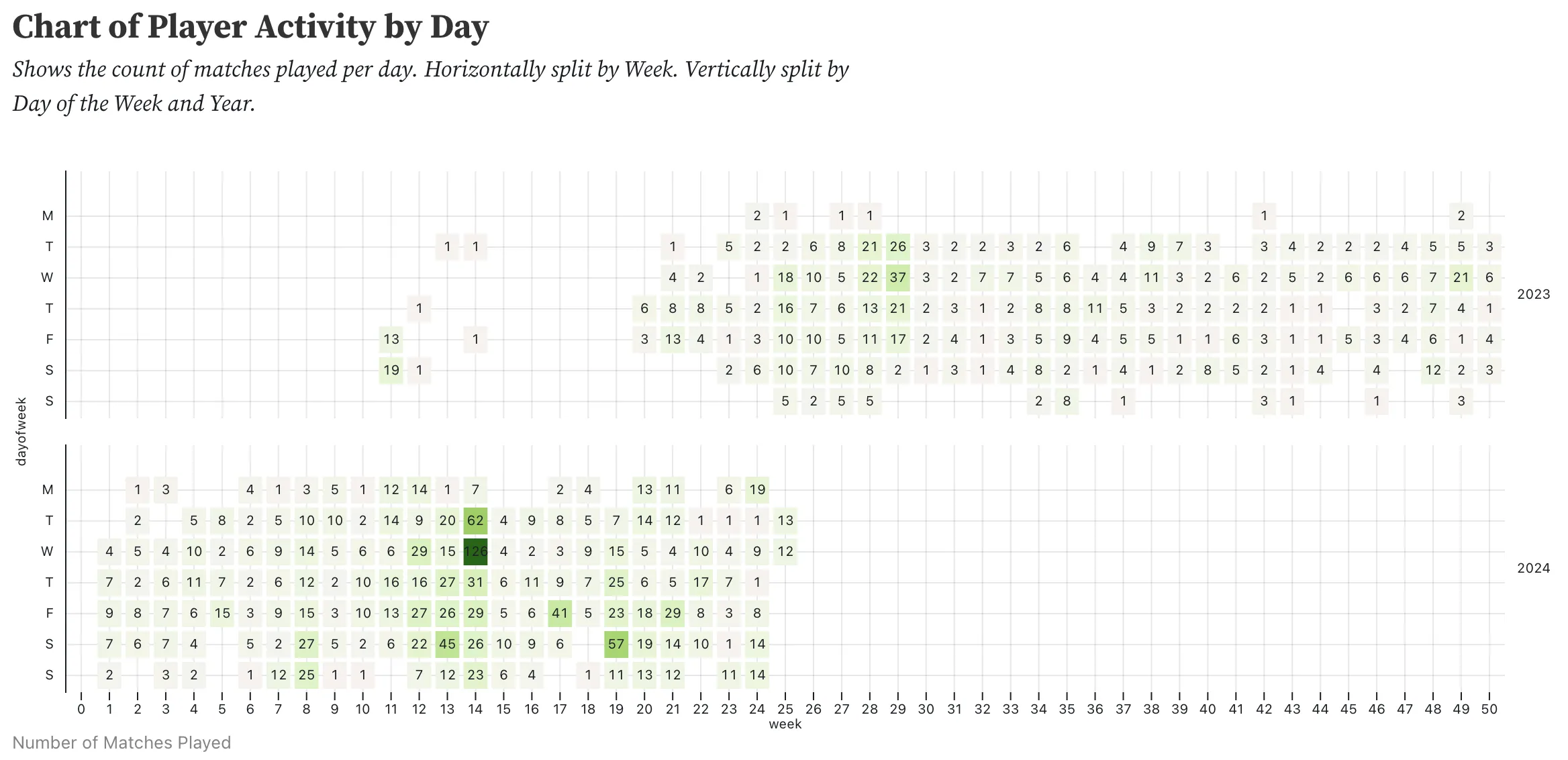

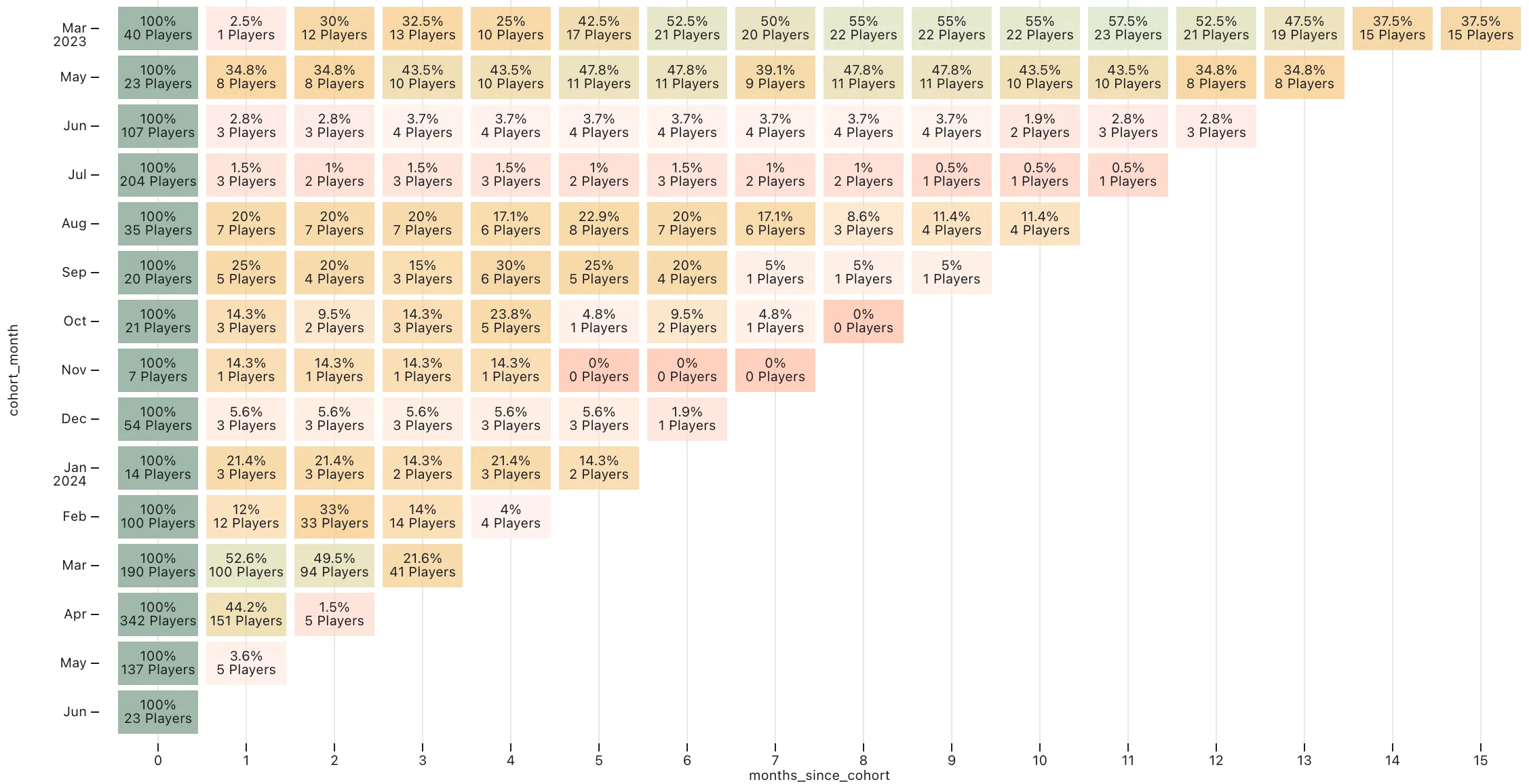

One piece of internal work reached further than the rest. I built a set of cohort-based retention charts — a GitHub-contribution-graph-style heatmap covering every playtest from early 2023 onward, and a monthly retention triangle — that the COO used in publishing-partner meetings. The SQL underneath was some of the most involved I wrote at Genpop, unioning live Databricks tables with historical backup data so the timeline didn't break across the studio's various data migrations.
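A monthly retention triangle groups players by the month they first appeared and tracks what share of each cohort was active in every later month. The sketch below expresses that logic in Python rather than the original Spark SQL, and assumes both the live and backup sources can be reduced to hypothetical `(player_id, session_date)` rows; the set union stands in for the SQL `UNION` that stitched the timeline together across migrations.

```python
from collections import defaultdict
from datetime import date
from typing import Iterable

Row = tuple[str, date]  # (player_id, session_date); hypothetical row shape


def month_index(d: date) -> int:
    """Months since year 0, so consecutive calendar months differ by exactly 1."""
    return d.year * 12 + (d.month - 1)


def retention_triangle(
    live_rows: Iterable[Row], backup_rows: Iterable[Row]
) -> dict[str, list[float]]:
    # Union live and backup sources; the set drops rows present in both,
    # like SQL UNION (as opposed to UNION ALL).
    activity = set(live_rows) | set(backup_rows)

    months_by_player: dict[str, set[int]] = defaultdict(set)
    for player_id, session_date in activity:
        months_by_player[player_id].add(month_index(session_date))
    if not months_by_player:
        return {}

    last_month = max(max(months) for months in months_by_player.values())

    # Cohort = month of a player's first recorded session.
    cohorts: dict[int, list[set[int]]] = defaultdict(list)
    for months in months_by_player.values():
        cohorts[min(months)].append(months)

    triangle = {}
    for cohort in sorted(cohorts):
        members = cohorts[cohort]
        # Later cohorts have fewer observable months, which gives the triangle shape.
        row = [
            sum(1 for months in members if cohort + offset in months) / len(members)
            for offset in range(last_month - cohort + 1)
        ]
        label = f"{cohort // 12}-{cohort % 12 + 1:02d}"
        triangle[label] = row
    return triangle
```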

The executive-facing layer was simpler in form but high-stakes. I produced data summary documents in Figma — match volume, performance distributions, retention, balance metrics — scoped to specific playtest sessions and presented to external publishing partners. Fast turnaround, built for a non-technical audience deciding whether to fund the studio.

End of Match Stats Page — Centerpiece

The headline project of my tenure was the end-of-match stats page (EoM) — a player-facing web app that generated a unique URL for every player after every match, showing what they did and how the match went. It built on the analytics foundation I'd been expanding in the internal notebooks.

Internally it was referred to as Core– (read: "core minus"), a deliberately scaled-back version of an earlier vision called Core+: a subscription analytics product modeled on third-party stats sites like op.gg or Blitz.gg, built with the advantage of internal data access. The original plan was to outsource the web foundation and have me build the game-specific analytics layer on top. When budget pressure scrapped the outsourcing piece, I ended up as the primary developer of the whole thing — a role I wasn't originally hired for.

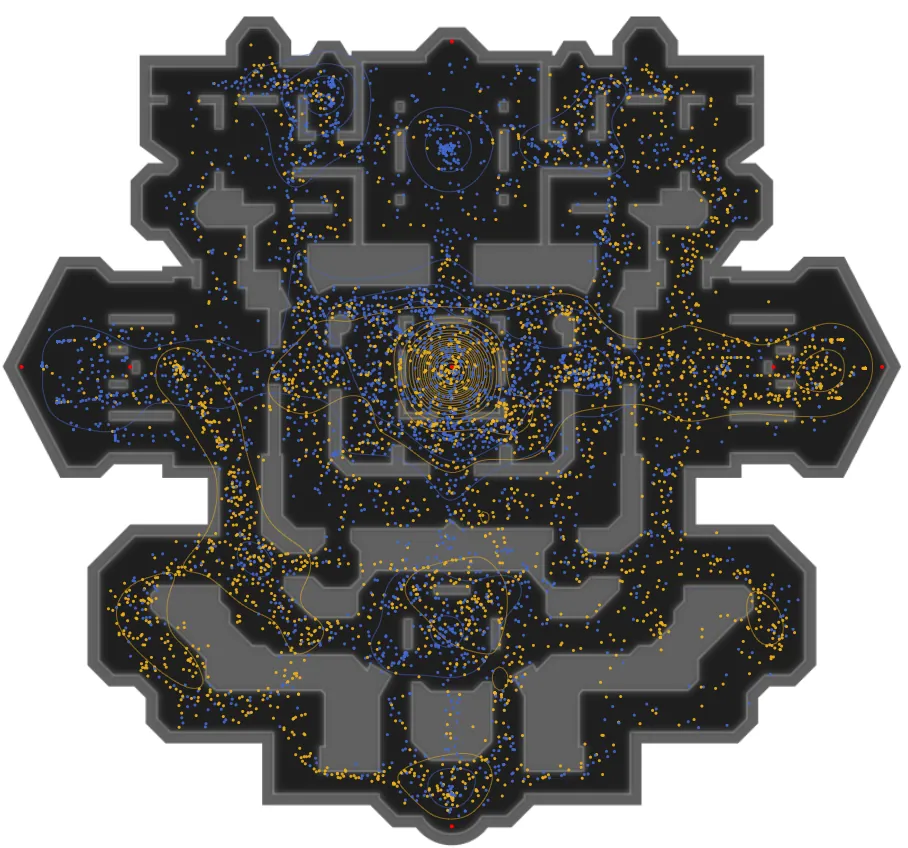

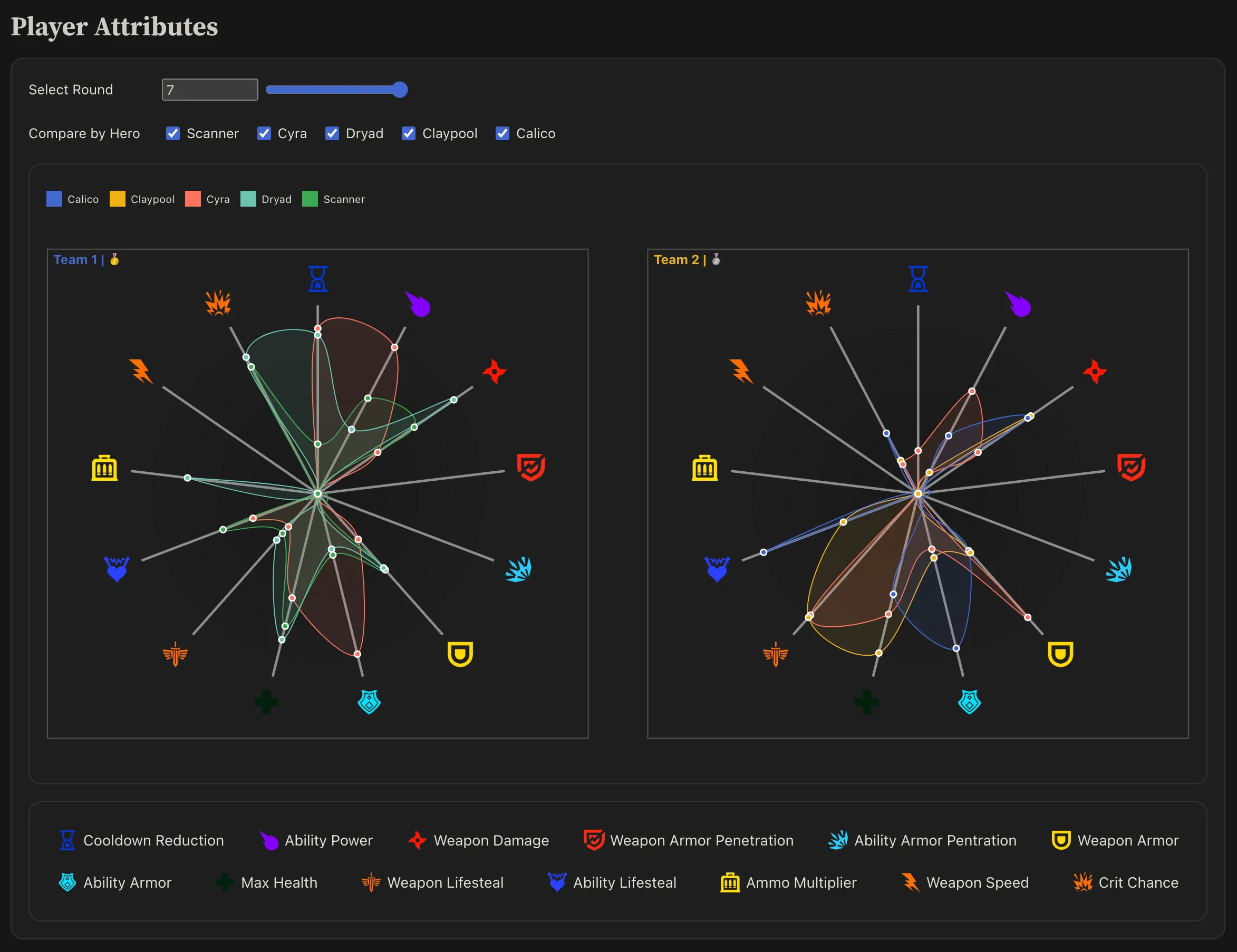

The page went live for external playtests at the end of January 2025, following the leaderboard as one of the first player-facing data products the studio shipped. Architecturally, queries ran on Databricks via Spark SQL, were pre-computed and cached as Parquet files on S3, served through CloudFront, and queried in-browser with DuckDB inside Observable Framework. An AWS Lambda function, triggered on S3 upload, kept the pipeline current. URLs were keyed on match ID and player ID, so every player got their own deep view of every match: per-second damage and healing with event flags for kills and objective captures, radar charts for player stat breakdowns, gold efficiency by round, and augment and item history. Syndicate, the studio's specialized internal playtest group of semi-professional MOBA and FPS players, used it after every session, and the feedback was positive, including favourable comparisons to Deadlock's post-match stats page.
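A sketch of the S3-triggered step, assuming a hypothetical object-key layout of `matches/<match_id>/players/<player_id>.parquet` and a hypothetical `/match/<match_id>/player/<player_id>` URL scheme. The event shape (`Records[].s3.object.key`, with URL-encoded keys) follows the standard S3 event notification format; what the real function did with each upload isn't described, so this version only reports which per-player pages were affected.

```python
import re
from urllib.parse import unquote_plus

# Hypothetical object-key layout; the real bucket structure isn't documented here.
KEY_PATTERN = re.compile(
    r"^matches/(?P<match_id>[^/]+)/players/(?P<player_id>[^/]+)\.parquet$"
)


def page_path(match_id: str, player_id: str) -> str:
    """Hypothetical URL scheme: one page per (match, player) pair."""
    return f"/match/{match_id}/player/{player_id}"


def handler(event: dict, context=None) -> dict:
    """Entry point for a Lambda subscribed to S3 ObjectCreated notifications.
    Returns the page paths whose cached data just changed."""
    refreshed = []
    for record in event.get("Records", []):
        # S3 URL-encodes object keys in event notifications (spaces become '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        match = KEY_PATTERN.match(key)
        if match is None:
            continue  # ignore uploads outside the per-player layout
        refreshed.append(page_path(match["match_id"], match["player_id"]))
    # A production function would act on these paths here (for example,
    # invalidating them in CloudFront); that step is omitted from this sketch.
    return {"refreshed": refreshed}
```

Keying both the cached file and the page URL on the same (match ID, player ID) pair means a single upload maps directly to the one page that needs refreshing.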

By March, with a high-stakes external investor playtest on the calendar, load time had become the problem. The first version had been built as a prototype, not designed to scale, and took 30 to 60 seconds to load: too slow for the investor demo, and slow enough that only the most data-hungry players and devs would wait for it. I redesigned it over a single long weekend, Thursday through Monday, working twelve- to sixteen-hour days to rebuild the data pipeline, SQL queries, and page architecture. Load time dropped to 1 to 2 seconds. The rebuild held up through the investor playtest and every playtest after it, and player usage climbed sharply.

What stuck with me about the project, beyond the technical work, was how it changed the shape of my role. I was hired to build the analytics layer on top of someone else's web foundation; by mid-2025 I was the person deciding whether to migrate the whole thing from Observable Framework to Nuxt.js, with architectural prototyping already underway when the studio closed. My promotion to Data Analyst came in June, and at closure my trajectory pointed toward principal-level scope. Most of the data work I'd done before Genpop had ended in a Tableau dashboard or a slide. This one ended in a URL that landed in players' hands.

Leaderboard

The leaderboard was the studio's first player-facing data product and the project that got Observable Framework into the stack. Discussions began in late September 2024, and it went live the next month for a public tournament. That deadline mattered: shipping for an external event meant the architectural and pipeline decisions had to hold up under real player traffic, not just internal review. A lot of what later made the EoM page possible — the AWS Lambda data pipeline, the Observable Framework deployment, the patterns for keeping cached data fresh — was first proven out here.

I kept extending it through the rest of my tenure, adding a developer-account toggle so external viewers could filter dev playtest data out of competitive standings. In early 2025 I rebuilt a faster beta version that became the performance template for the EoM page. Smaller in scope than EoM, but it shipped earlier and stayed in production through closure.
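The developer-account toggle amounts to a filter applied before standings are aggregated. In this minimal sketch, the `MatchResult` shape, its `is_dev_account` flag, and the points-based ranking are all assumptions; the source doesn't say how the leaderboard scored players.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class MatchResult:
    player_id: str
    is_dev_account: bool  # hypothetical flag marking studio/dev accounts
    points: int           # hypothetical per-match score


def standings(
    results: Iterable[MatchResult], hide_dev_accounts: bool = True
) -> list[tuple[str, int]]:
    """Total points per player, highest first; dev accounts are excluded when the toggle is on."""
    totals: dict[str, int] = {}
    for result in results:
        if hide_dev_accounts and result.is_dev_account:
            continue  # toggle on: dev playtest data never reaches the standings
        totals[result.player_id] = totals.get(result.player_id, 0) + result.points
    # Sort by points descending, then by player ID for a stable tie-break.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))
```

Filtering before aggregation, rather than hiding rows after ranking, means a dev account can't shift totals or rank positions when the toggle is on.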

Reflection

Post-closure, what's stayed with me is how much shipping data to players changed the work itself. An internal notebook can run slow and still be useful; a public page can't. An internal chart can be confusing if the right person is in the room to explain it; a player-facing one can't. Working under those constraints for sixteen months changed what I think good analytics work looks like — less about producing the right answer, more about producing it in a form that survives contact with the people who actually need it.